This website accompanies my PhD project. Its main point was that cognitive models of rhythm perception were too rigid to account for the flexibility and plasticity of rhythm perception. For this reason, an individual’s rhythm perception should be seen as a function of their extensive exposure to music, their active participation in cultural activities, and the other experience and practice that have shaped them.

Here, I’m collecting code, materials, and random tidbits related to this work.

You might also be interested in

While trying to integrate my rhythm modelling efforts into IDyOM, I realised that the problem could be solved more generally.

The result is Jackdaw, a library for specifying dynamic Bayesian networks.

Jackdaw allows you to specify these models compactly in Common Lisp code.

The flexibility of this framework derives from a type of deterministic constraint that can be encoded in Jackdaw models, which I have dubbed congruency constraints.

Congruency constraints are explained in chapter three of my thesis.

Jackdaw can be found here.

An introductory tutorial can be found here.

A few example implementations of various models can be found in the examples directory of the tutorial.

The examples are self-contained given a working Jackdaw installation.

In our 2017 Frontiers paper, we presented a proof-of-concept “simulation of enculturation” obtained by training a probabilistic generative model on folksongs from different cultures and cross-evaluating the model on test data from the respective cultures.

The paper contains a few scatter plots that visualize a kind of enculturation space in which the position of each rhythm encodes how much it aligns with the biases of one of the two cultures.
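
To make the idea of such a space concrete, here is a minimal sketch of cross-evaluation. It is not the model from the paper: it swaps in a toy add-one smoothed first-order Markov model over duration symbols, and it assumes that the two coordinates of a rhythm are its mean cross-entropy under each culture's model. The rhythms, the function names and the smoothing constant are all invented for illustration.

```python
import math
from collections import Counter, defaultdict

START = "<s>"  # hypothetical start-of-rhythm symbol


def train_bigram(rhythms, alphabet, alpha=1.0):
    """Fit an add-alpha smoothed first-order Markov model (toy stand-in)."""
    counts = defaultdict(Counter)
    for rhythm in rhythms:
        prev = START
        for symbol in rhythm:
            counts[prev][symbol] += 1
            prev = symbol

    def prob(prev, symbol):
        seen = counts[prev]
        total = sum(seen.values())
        return (seen[symbol] + alpha) / (total + alpha * len(alphabet))

    return prob


def cross_entropy(prob, rhythm):
    """Mean negative log2 probability per event, in bits."""
    prev, bits = START, 0.0
    for symbol in rhythm:
        bits -= math.log2(prob(prev, symbol))
        prev = symbol
    return bits / len(rhythm)


# Invented toy corpora: each rhythm is a list of durations in arbitrary units.
culture_a = [[2, 1, 1, 2, 1, 1], [2, 2, 1, 1, 2]]
culture_b = [[3, 1, 3, 1, 2], [3, 1, 2, 3, 1]]
alphabet = sorted({d for rhythm in culture_a + culture_b for d in rhythm})

model_a = train_bigram(culture_a, alphabet)
model_b = train_bigram(culture_b, alphabet)


def enculturation_point(rhythm):
    """Coordinates of one rhythm: (bits under model A, bits under model B)."""
    return cross_entropy(model_a, rhythm), cross_entropy(model_b, rhythm)


# Held-out rhythms, one in the style of each toy culture.
test_rhythms = [[2, 1, 1, 2], [3, 1, 3, 1]]
points = [enculturation_point(rhythm) for rhythm in test_rhythms]
```

Under these assumptions, a rhythm that is typical of one culture gets a low value on that culture's axis and a higher value on the other, so it sits away from the diagonal of the plot.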

In the paper, these figures are necessarily somewhat small, causing a lot of information to be obscured.

Also, it is not possible to see which actual rhythm corresponds to each datapoint.

A few years ago, I used a nifty library called mpld3, which allows you to generate interactive plots with the matplotlib API, to create an interactive version of these scatter plots.

Below you can find an interactive version of Figure 4A of the paper.

Hovering over each datapoint should reveal a score rendition of the rhythm it represents.

The little gray buttons in the bottom-left corner can be used for navigation (try zooming in!).

Note that the melodic aspects of these scores were ignored in our simulations; only the rhythms mattered.

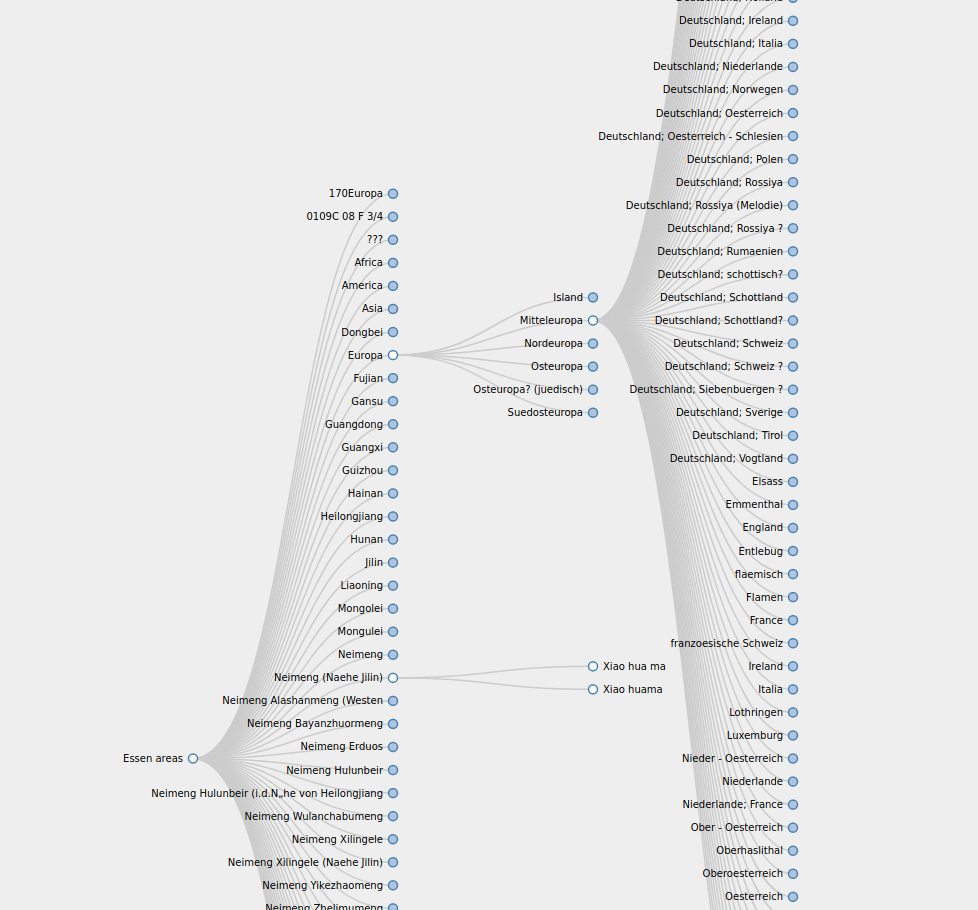

The Essen folksong collection is an intriguing relic of computational musicology. While its origins are surrounded by an air of mystery [1], it’s widely used as an empirical source of symbolically encoded folk melodies.

Along with each melody, some information on its likely geographical region of origin is provided.

This information, though meticulous, is a bit tangly.

A couple of years ago, I found myself craving a visual overview of the contents of this dataset.

Since the area-of-origin annotations are organised hierarchically, it seemed useful to visualise them as a tree, with each larger area expanding into smaller sub-areas.
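
The step from hierarchical annotations to a tree can be sketched roughly as follows. This is not the code behind the visualisation: it assumes each song has already been parsed into a title plus a list of areas ordered from largest to smallest, and it produces the nested `name`/`children` structure that many JavaScript tree layouts accept as JSON. The song titles, area names and function name are placeholders, not actual entries from the collection.

```python
import json


def build_area_tree(songs, root_name="Essen collection"):
    """Nest each song under its area path as name/children dictionaries."""
    root = {"name": root_name, "children": []}
    for title, area_path in songs:
        node = root
        for area in area_path:
            children = node.setdefault("children", [])
            # Reuse the sub-area node if it exists, otherwise create it.
            match = next((c for c in children if c["name"] == area), None)
            if match is None:
                match = {"name": area, "children": []}
                children.append(match)
            node = match
        # Songs become leaf nodes below the most specific area.
        node.setdefault("children", []).append({"name": title})
    return root


# Placeholder records: (song title, areas from largest to smallest).
songs = [
    ("Song A", ["Europe", "Central Europe", "Germany"]),
    ("Song B", ["Europe", "Central Europe", "Germany"]),
    ("Song C", ["Europe", "Central Europe", "Switzerland"]),
    ("Song D", ["Asia", "China"]),
]

tree = build_area_tree(songs)
tree_json = json.dumps(tree, indent=2)  # ready to hand to a tree layout
```

Keeping the areas as an ordered path per song means that shared prefixes collapse into shared branches, which is what lets each larger area expand into its sub-areas.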

Using some pre-baked JavaScript, I cobbled together a pannable, zoomable and interactively explorable tree.

Area tree with song names as leaf nodes.

This visualisation is based heavily on this GitHub gist.

Some related resources: